Reviewing the GDAP Wizard in Lighthouse

Hey folks! In today’s article, we will be taking a closer look at Granular Delegated Admin Permissions or GDAP. You can think of this feature as providing similar functionality to Privileged Identity Management (PIM), including “Just-in-Time” (JIT) access, but specifically with regard to your partner tenant as you “reach across” into customer tenants in order to manage their subscriptions and services. If you are a Managed Services Provider, and you are constantly switching between customer tenants throughout the day, then this article is for you!

In The Days of Old

In the past, Partner Center would have been your “gateway” to managing customer tenants. We had the ability to establish relationships with our customers via Delegated Admin Permissions (DAP). To say the least, this was a rather clunky experience that left a lot to be desired. For example, accessing customer tenants from your own partner tenant was fraught with known issues and limitations that were not well documented anywhere (therefore most of us still just signed into the customer tenant directly using different browser profiles or “private windows”). Plus, with the DAP relationship in place your native partner account would effectively have global administrator privileges to all tenants all the time.

The situation was less than ideal. Eventually we got Granular Delegated Admin Permissions (GDAP), which goes a long way toward fixing some of these problems. For example, GDAP can be leveraged to provide Just-in-Time (JIT) access to customer tenants, so that you elevate permissions only when you need to make changes, and that access is automatically time-bound (for example, you can set it to expire after 2 hours).

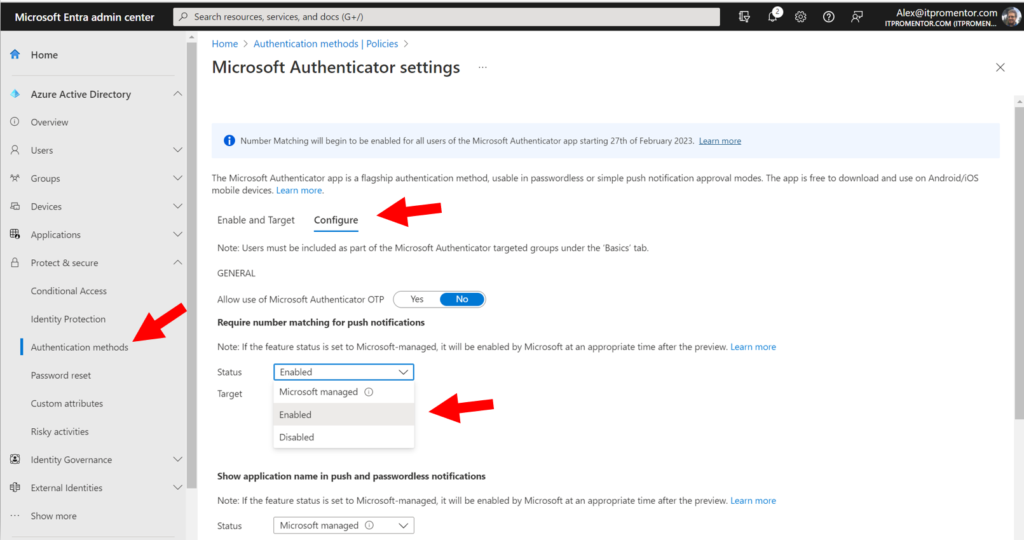

In order to take advantage of the JIT access capabilities, the partner tenant will need to have Azure AD Premium P2 licensing. In case you weren’t aware, Microsoft announced back in October 2021 that they would be giving away two years’ worth of AAD P2 subscriptions for Partners (and no, I am not sure if there are plans to extend this or not).

GDAP Wizard in Lighthouse

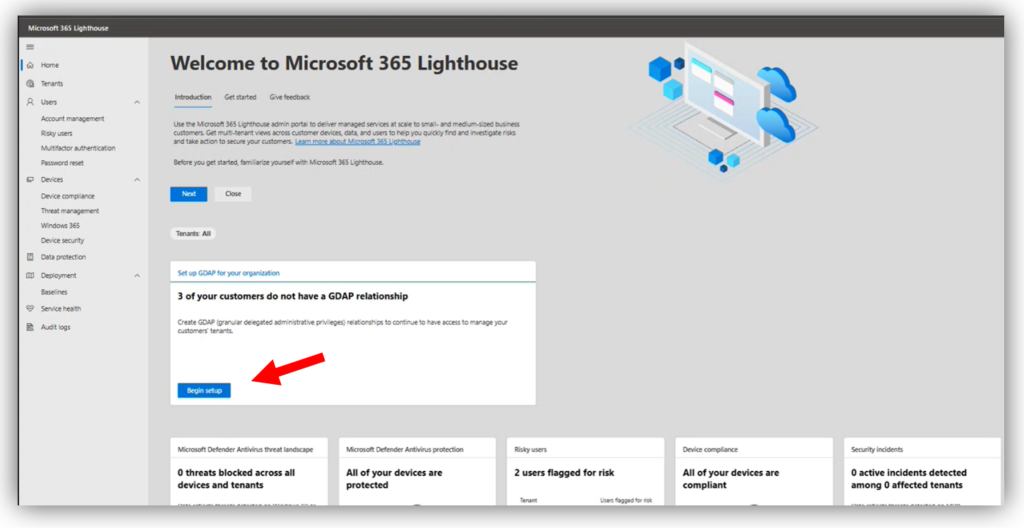

GDAP used to be pretty difficult to set up, but in recent months Microsoft 365 Lighthouse has made it much easier to establish GDAP relationships, or to convert existing DAP relationships. It is also worth mentioning that Microsoft 365 Lighthouse recently celebrated a birthday. If you haven’t looked at this free multi-tenant management tool yet, or if it has been a while since you’ve poked around in there, I would encourage you to check it out (or revisit it). It has come a long way recently, and it is certainly the best place to set up your GDAP relationships.

Note: The official documentation states (incorrectly) that you need to be a Cloud Solution Provider (CSP) to use Lighthouse. This is not entirely accurate: many MSPs are not technically CSPs, but they likely still have access to Partner Center. Some of them sell licenses through a distributor such as Ingram Micro or Pax8; others simply serve customers who buy direct from Microsoft. However your own practice is set up, you can get into Lighthouse with nothing but Partner Center access and the existing DAP customer relationships that live there.

Before you start, make sure you have a Global administrator account in your partner tenant that is also assigned the Admin agent role in Partner Center, as these permissions are prerequisites for configuring GDAP via Lighthouse. Using this account, navigate to https://lighthouse.microsoft.com and find the GDAP wizard right on the home page.

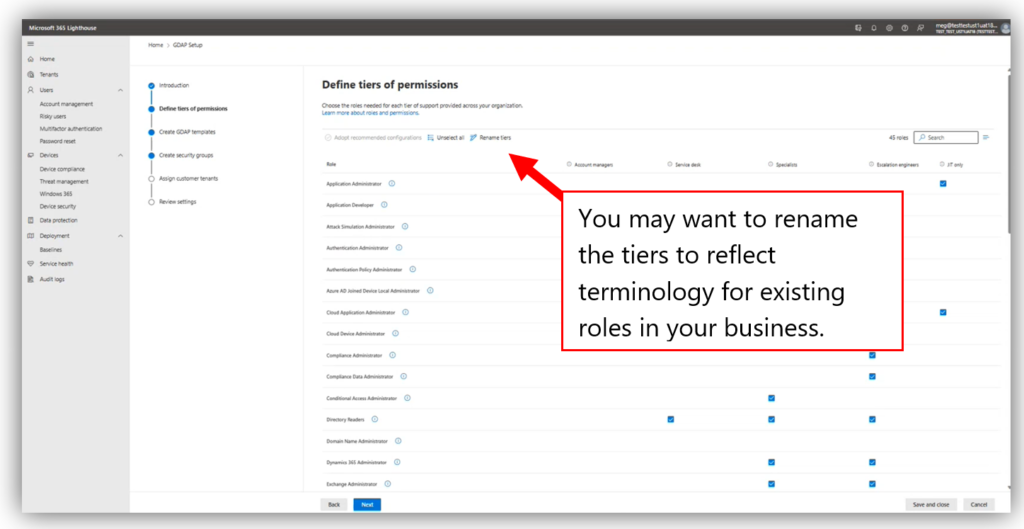

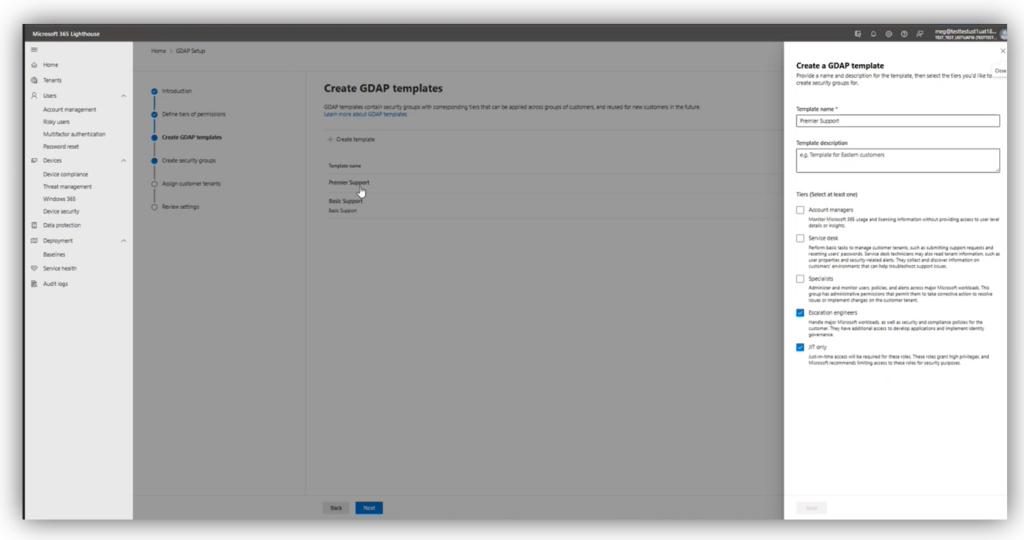

One of the great things about this tool is that it comes with pre-defined “tiers” that you can use straight out of the box, or you can customize them to your liking.

Tiers are collections of Azure AD roles that are selected for specific job functions like Account manager, Service desk, or Escalation engineer. There is also a “JIT-only” tier which would include your high impact roles such as Global administrator. More on the JIT role later. Note that you can also rename the tiers if you want to use different terminology in your own practice, such as Level 1 tech, Level 2 tech, etc.

The wizard will help you build “templates” that you can then assign to one or more customers. Each template can contain one or several of the tiers. For example, you may have “fully managed” customers who require, at different times, a variety of these tiers all the way up to Escalation engineers and the JIT-only role. But you might also have customers who are not fully managed, or only require Account management, or Service desk roles. In this case you could have two templates and apply only the roles you need to each respective group of customers.
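
If it helps to think of tiers and templates as data, here is a minimal Python sketch of the relationship: a tier is a named set of Azure AD roles, and a template is a list of tiers plus the customers it is assigned to. The class names, the customer tenant names, and especially which roles sit in which tier are my own illustrative assumptions, not the wizard’s actual defaults.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Tier:
    """A named bundle of Azure AD roles for one job function."""
    name: str
    roles: frozenset[str]


@dataclass
class Template:
    """A set of tiers that is applied to one or more customer tenants."""
    name: str
    tiers: list[Tier]
    customers: set[str] = field(default_factory=set)

    def roles(self) -> set[str]:
        # Union of every role granted by any tier in this template
        granted: set[str] = set()
        for tier in self.tiers:
            granted |= tier.roles
        return granted


# Illustrative tier contents only; the wizard's real defaults may differ.
account_mgr = Tier("Account manager", frozenset({"Billing Administrator"}))
service_desk = Tier("Service desk", frozenset({"Helpdesk Administrator", "Global Reader"}))
escalation = Tier("Escalation engineer", frozenset({"Exchange Administrator", "User Administrator"}))
jit_only = Tier("JIT-only", frozenset({"Global Administrator"}))

fully_managed = Template("Fully managed", [account_mgr, service_desk, escalation, jit_only])
basic = Template("Account and service desk", [account_mgr, service_desk])

# Hypothetical customer tenants
fully_managed.customers |= {"contoso.onmicrosoft.com", "fabrikam.onmicrosoft.com"}
basic.customers.add("tailspin.onmicrosoft.com")

print(sorted(basic.roles()))
# ['Billing Administrator', 'Global Reader', 'Helpdesk Administrator']
```

The point of the two templates is that the “not fully managed” customer never receives the JIT-only tier at all, so Global Administrator is simply not on the table for that tenant.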

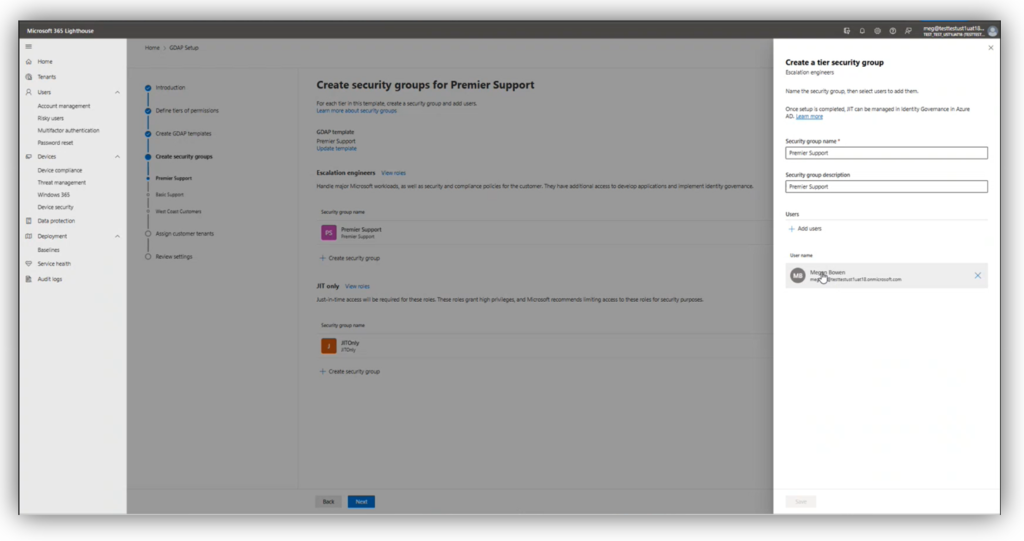

For each role, you need to create a security group in your partner tenant. Note: I have experienced at times that adding users is difficult from Lighthouse; for example, sometimes it cannot see all of my users, so I will need to go edit these groups later in my Azure AD or Microsoft 365 admin centers. I am guessing there are still some bugs being worked out here.

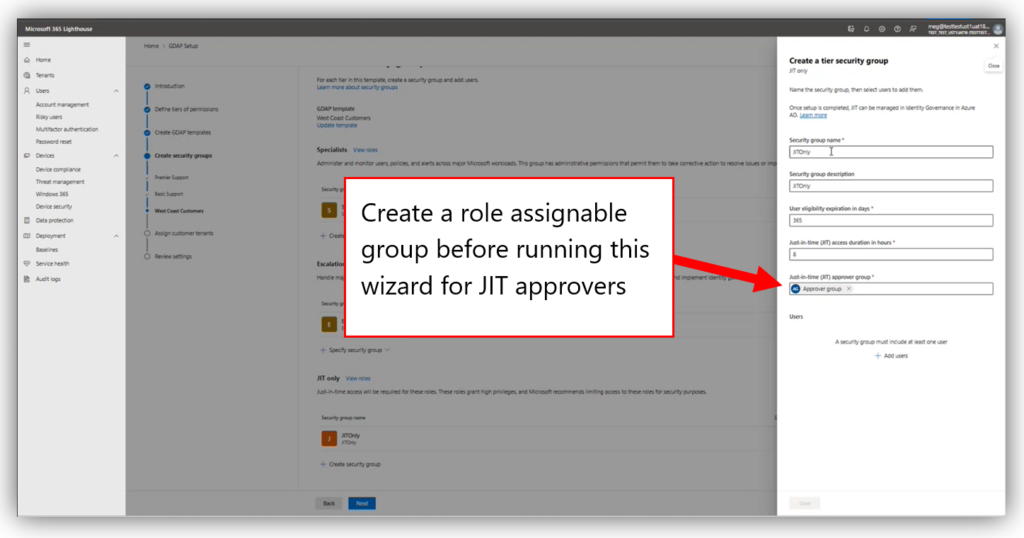

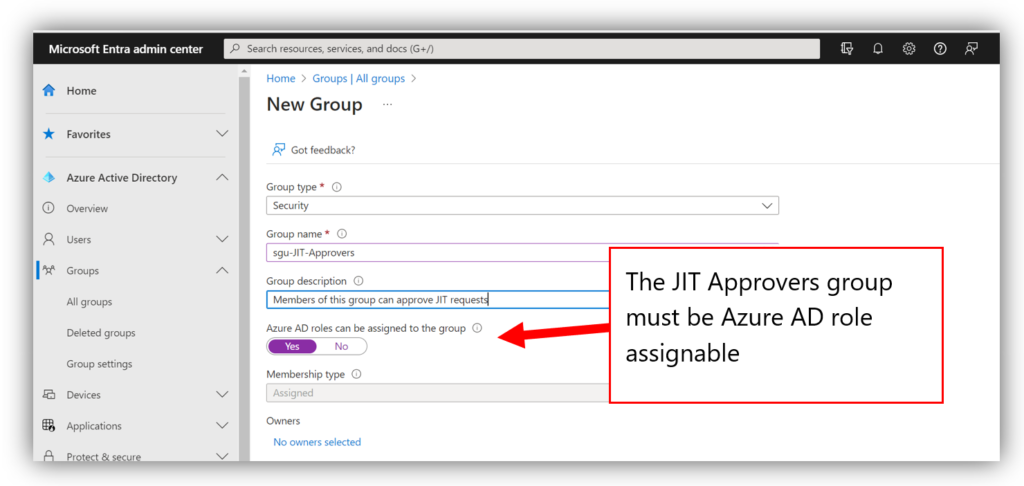

For the JIT-only role you actually need to specify two security groups: the “JIT Eligible” group itself (i.e., those users who can be elevated to global admin in your customers’ tenants) and the “JIT Approver” group (those responsible for approving any requests to elevate permissions). Be sure to create your JIT Approver group in advance, before you run the wizard (the other groups can be created within the wizard). Also, make sure that this group is configured to be Azure AD role assignable.
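
If you would rather pre-create the JIT Approver group with Microsoft Graph instead of the admin center, the request body looks roughly like the sketch below. It only builds the JSON for `POST https://graph.microsoft.com/v1.0/groups` and does not send it (you would need an access token with suitable directory permissions for that). The helper name and group names are hypothetical; `isAssignableToRole` is the Graph group property behind the “Azure AD role assignable” setting, and it can only be set when the group is created, so it is worth getting right up front.

```python
import json


def security_group_payload(display_name: str, mail_nickname: str,
                           role_assignable: bool = False) -> dict:
    """Request body for POST https://graph.microsoft.com/v1.0/groups (not sent here)."""
    body = {
        "displayName": display_name,
        "mailEnabled": False,       # plain security group, no mailbox
        "mailNickname": mail_nickname,
        "securityEnabled": True,
    }
    if role_assignable:
        # isAssignableToRole can only be set when the group is created;
        # it cannot be switched on for an existing group afterwards.
        body["isAssignableToRole"] = True
    return body


# Hypothetical group names
approvers = security_group_payload("GDAP JIT Approvers", "gdap-jit-approvers",
                                   role_assignable=True)
service_desk = security_group_payload("GDAP Service Desk", "gdap-service-desk")

print(json.dumps(approvers, indent=2))
```

Only the approver group gets the role-assignable flag here; the ordinary tier groups can still be created inside the wizard as described above.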

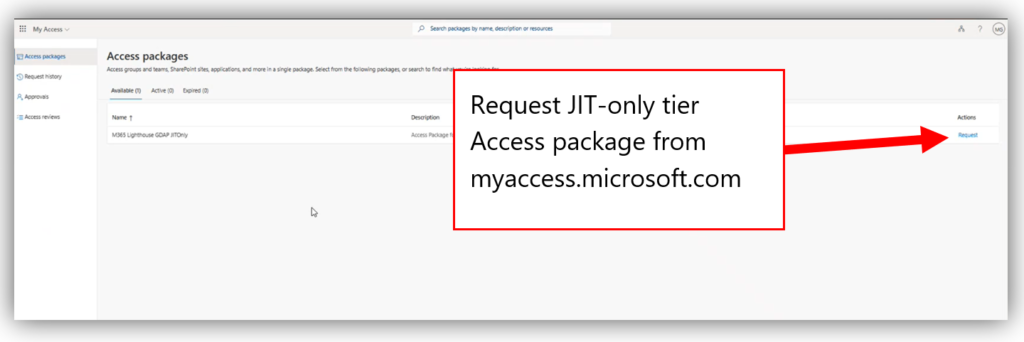

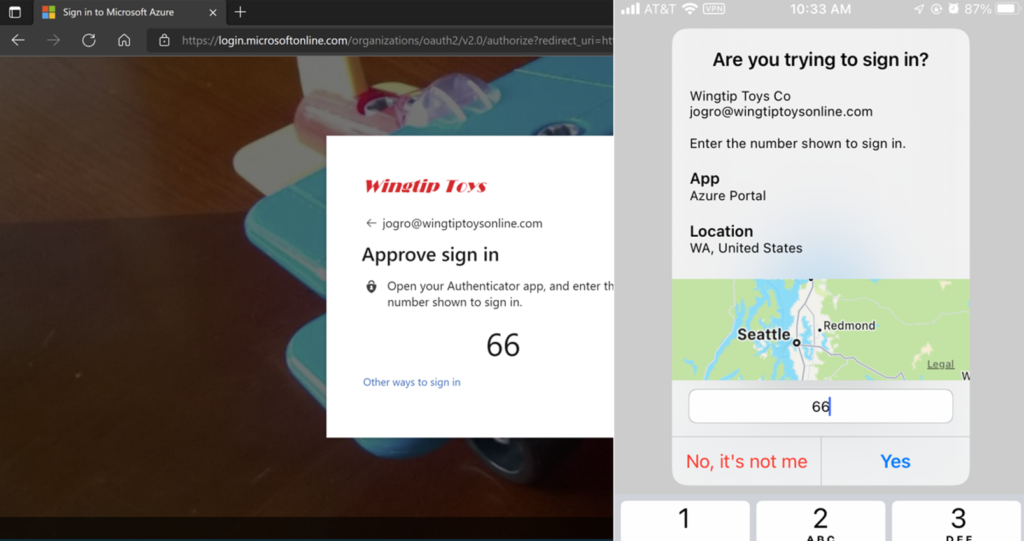

Once you assign customers to a template, they are converted from DAP to GDAP. To elevate into the JIT-only role, eligible users must navigate to https://myaccess.microsoft.com and request role elevation for the desired access package.

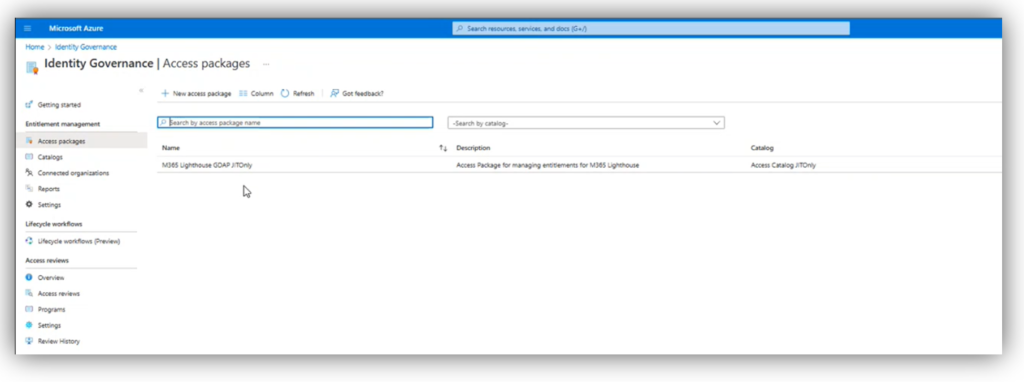

The approvers can then answer these requests from the same “myaccess” portal under Approvals. Once a request is approved, the privileged roles are activated for the duration specified in the wizard. Note that this process does not use PIM; instead, it leverages access packages from Azure AD Identity Governance (see Entitlement Management).
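
To make the request, approve, and expire flow concrete, here is a small Python model of it. This is a hypothetical sketch of the behavior described above (approvers answer a request, access activates for a fixed window, then lapses on its own); it is not the Entitlement Management API, and the class, field, and user names are made up for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from enum import Enum
from typing import Optional


class State(Enum):
    REQUESTED = "requested"
    ACTIVE = "active"
    DENIED = "denied"


@dataclass
class ElevationRequest:
    requester: str
    duration: timedelta                  # duration chosen in the wizard
    state: State = State.REQUESTED
    activated_at: Optional[datetime] = None

    def decide(self, approver: str, approvers: set[str],
               approve: bool, now: datetime) -> None:
        # Only members of the JIT Approver group may answer a request
        if approver not in approvers:
            raise PermissionError(f"{approver} is not in the approver group")
        if self.state is not State.REQUESTED:
            raise ValueError("request has already been answered")
        if approve:
            self.state = State.ACTIVE
            self.activated_at = now
        else:
            self.state = State.DENIED

    def is_active(self, now: datetime) -> bool:
        # No revoke step needed: access simply lapses once the window ends
        return (self.state is State.ACTIVE
                and self.activated_at is not None
                and now < self.activated_at + self.duration)


start = datetime(2023, 4, 1, 9, 0, tzinfo=timezone.utc)
req = ElevationRequest("tech@partner.example", timedelta(hours=2))
req.decide("lead@partner.example", {"lead@partner.example"}, approve=True, now=start)

print(req.is_active(start + timedelta(minutes=90)))  # True
print(req.is_active(start + timedelta(hours=2)))     # False
```

The design point worth noticing is that expiry is computed from the activation time rather than requiring anyone to remember to remove the role, which is the whole appeal over the old “global admin all the time” DAP model.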

Potential downsides

Now one of the criticisms I have of this tool is that every customer who is attached to a template is equally impacted by the JIT escalation requests. There is only one access package created, so when you elevate an account to the JIT-only role, that account will gain superuser privileges in every tenant attached to the template for the time period specified. This implies that if you wanted to isolate your JIT access requests (which I think would be the most ideal scenario), you would need to create a unique JIT-only template for each customer.

That might be okay if you are only managing a handful of customers, but it makes this wizard a heck of a lot harder to use if you manage dozens or hundreds of customers. I would really like to see this improved in a future update, but for now I just want you to understand what the limitation is. Most of us will probably not want to run this wizard 100+ times and step through every customer every single time; it ends up (at least partially) defeating the purpose of the simplified wizard to begin with.
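
The trade-off is easy to see with a bit of arithmetic. In the hypothetical Python sketch below, one shared JIT-only template means a single approval elevates you in all 100 customer tenants, while isolating each customer shrinks that blast radius to one tenant at the cost of 100 templates (and 100 wizard runs). The function names and customer list are illustrative assumptions, not anything Lighthouse exposes.

```python
def build_jit_templates(customers: list[str], isolate: bool) -> dict[str, set[str]]:
    """Map JIT-only template name -> customer tenants attached to it."""
    if isolate:
        # One template, and therefore one access package, per customer
        return {f"JIT-only: {c}": {c} for c in customers}
    # One template and one shared access package for every customer
    return {"JIT-only: all customers": set(customers)}


def tenants_elevated(templates: dict[str, set[str]], template: str) -> set[str]:
    # An approved request against a template's access package elevates the
    # user in every tenant attached to that template.
    return set(templates[template])


customers = [f"customer{i:03}" for i in range(100)]  # hypothetical tenants

shared = build_jit_templates(customers, isolate=False)
isolated = build_jit_templates(customers, isolate=True)

print(len(shared), len(tenants_elevated(shared, "JIT-only: all customers")))  # 1 100
print(len(isolated), tenants_elevated(isolated, "JIT-only: customer000"))     # 100 {'customer000'}
```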

The other criticism I have is that we don’t (yet) have a great way to delete these relationships from Microsoft 365 Lighthouse. You can delete the templates, but this just removes the template object from Lighthouse itself, leaving all of the associated security groups, access packages, etc. in place. Therefore, to accomplish a “real” deletion, you would need to:

- Delete the template in Lighthouse

- Delete the corresponding access packages in Azure AD Identity Governance

- Delete the security groups in Azure AD

- Delete the GDAP relationship from Partner Center
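
If you end up scripting steps 2 through 4, here is a hedged Python sketch that only builds an ordered list of Microsoft Graph calls and sends nothing. The endpoints are from the Graph v1.0 reference as I understand it, so verify them before running anything destructive: Graph may refuse to delete an access package that still has assignments, and an active GDAP relationship is ended by submitting a request with `"action": "terminate"` rather than by a plain DELETE. The object IDs are hypothetical, and step 1 (deleting the template) stays a manual click in Lighthouse.

```python
from typing import Optional

GRAPH = "https://graph.microsoft.com/v1.0"


def cleanup_plan(access_package_ids: list[str], group_ids: list[str],
                 relationship_id: str) -> list[tuple[str, str, Optional[dict]]]:
    """Ordered (method, url, body) calls for steps 2-4; nothing is sent."""
    # Step 1, deleting the template itself, is done in the Lighthouse portal.
    plan: list[tuple[str, str, Optional[dict]]] = []
    # Step 2: access packages (Graph may refuse while assignments still exist)
    for pkg in access_package_ids:
        plan.append(("DELETE",
                     f"{GRAPH}/identityGovernance/entitlementManagement/accessPackages/{pkg}",
                     None))
    # Step 3: the partner-tenant security groups
    for grp in group_ids:
        plan.append(("DELETE", f"{GRAPH}/groups/{grp}", None))
    # Step 4: an active GDAP relationship is terminated via a request, not deleted
    plan.append(("POST",
                 f"{GRAPH}/tenantRelationships/delegatedAdminRelationships/{relationship_id}/requests",
                 {"action": "terminate"}))
    return plan


# Hypothetical object IDs
for method, url, body in cleanup_plan(["pkg-1"], ["grp-1", "grp-2"], "rel-1"):
    print(method, url, body or "")
```

Keeping the plan as plain data makes it easy to review (or dry-run) before pointing a real HTTP client at it.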

But so far, these do seem to be the only real downsides to the solution Microsoft has come up with. At least we can say it is a good improvement on the previous architecture, where we only had global admin all the time. The questions that remain in my mind are:

- Will we be able to keep our Azure AD P2 licenses at no cost to the partner after the initial two years?

- Will we ever get the ability to request JIT elevation only for specific customers, or will it always remain “per template” (and if so, can we at least make it easier to clone templates or something, for applying per-customer JIT requests?)

- Let’s say we have to remove customers or even entire templates, which could mean we also want the corresponding access packages (and in some cases even the relationships) terminated as well; shouldn’t this “offboarding” process be a bit easier?

Time will tell! But otherwise, I am pleased with the progress that has been made to date. Good job, Lighthouse team!

This article was written by the new Bing chatbot.*

*…April Fools! This article, like all content on ITProMentor.com, was written by a Real Human™

The post Reviewing the GDAP Wizard in Lighthouse appeared first on ITProMentor.

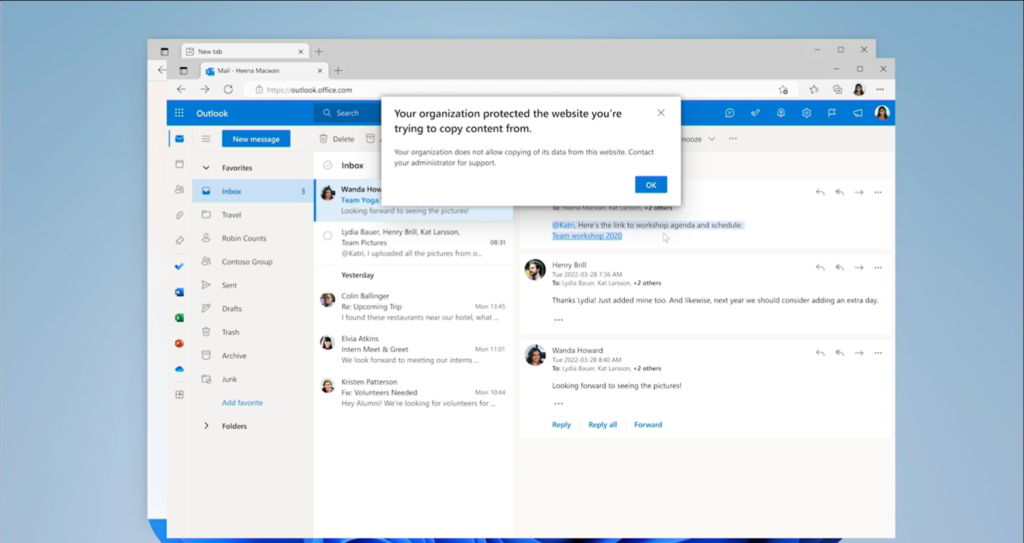

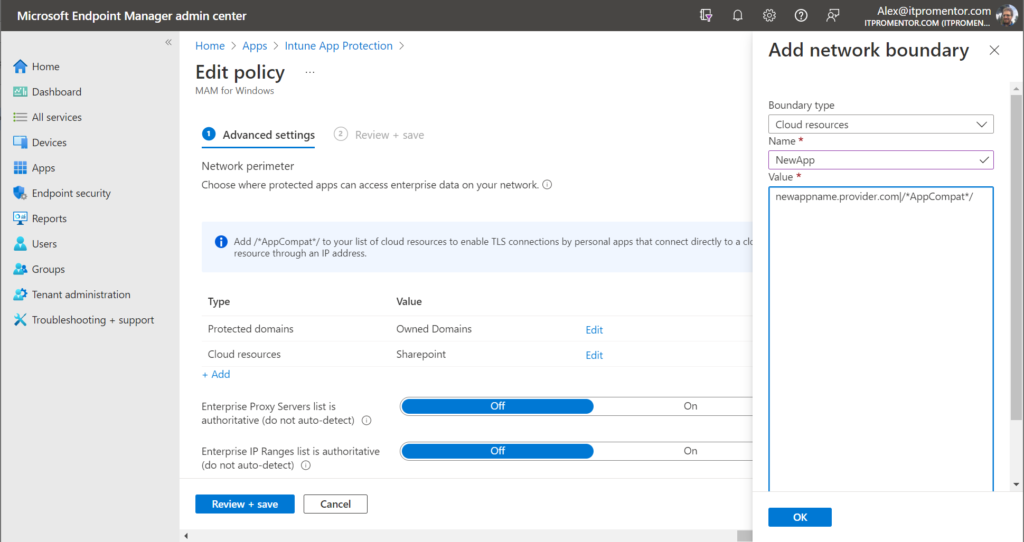

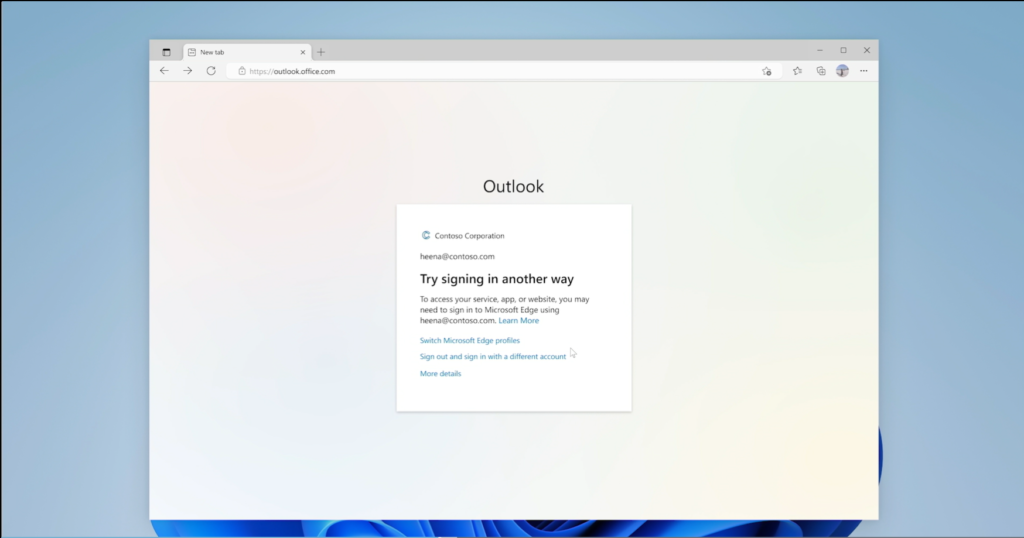

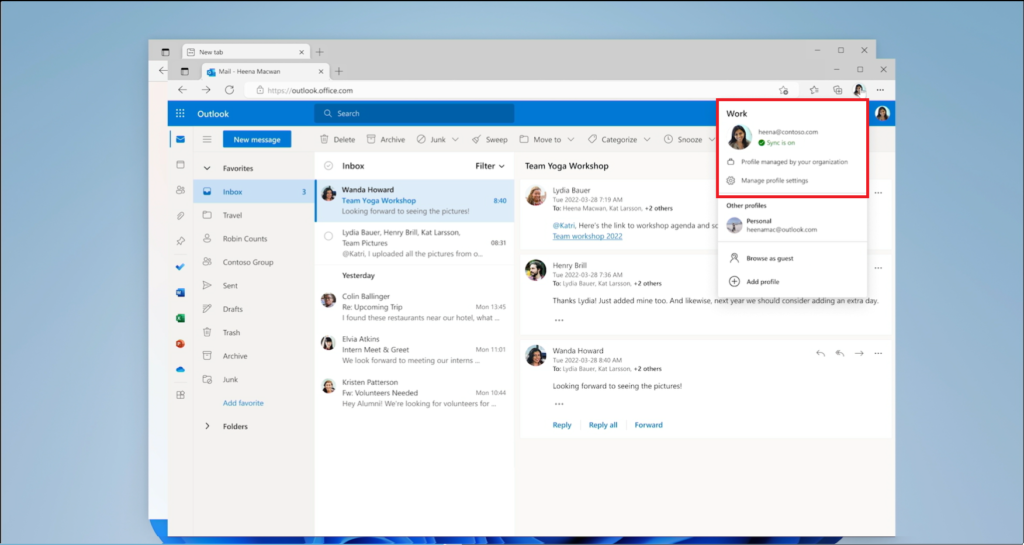

Clearly, we can see the user is now signed in with a corporate profile (and I suspect that this means any site the user visits under the corporate profile would be within the “corporate boundary,” without us having to manage a list of apps and websites in a “network boundary” within a policy somewhere).
